Could be worse - Random topics in statistical physics, spin glasses, optimization and average case hardness

The Random Energy Model – Part I

28 December 2025 | E. Malatesta

Main definitions

The Random Energy Model (REM) is probably the easiest disordered system model with a non-trivial (spin glass) behaviour. It has been introduced and solved by B. Derrida (Derrida (1980)). It consists in having random independent energy levels each one being extracted from a Gaussian distribution, which I take for simplicity to be having zero mean and variance

In the following I will call by ''disorder'' the realization of the energy level samples.

Boltzmann-Gibbs distribution and thermodynamic quantities

Given an instance of the energy levels we assign to each of them a weight given by the Boltzmann-Gibbs distribution

where is the inverse temperature and the normalization constant is the so-called partition function

From the knowledge of we can compute the (intensive[1]) free energy of the model

The free energy in turn gives access to all thermodynamic quantities of interest. Denoting by the average with respect to the Boltzmann-Gibbs distribution (2) we can compute the energy

and the entropy by

Large limit and self-averageness

Computing the partition function of the REM is not an easy task. Indeed, at variance with standard non-disordered models of statistical mechanics, the Boltzmann-Gibbs distribution , and therefore also and are themselves random variables as they all depend on the realization of the energy levels.

Luckily, when we perform the thermodynamic limit , usually many simplifications arise, as the properties of intensive observables become independent of the disorder or the sample realization. In other words, the probability distribution of the free energy becomes peaked around its expected value. Formally, for any it holds that

where I have denoted by the average over disorder given in (1). An observable satisfying such property is called self-averaging in statistical physics; this is equivalent to the concept of concentration of a random variable in math. This means that does not appreciably fluctuate from sample to sample when is large.

We are interested in studying the properties of the Boltzmann-Gibbs distribution in the limit .

Solving the REM using the microcanonical ensemble

Instead of working out with the temperature fixed (i.e. in the canonical ensemble), it is much easier to solve the REM by fixing the energy , which in statistical mechanics is the so-called microcanonical ensemble. In this ensemble one aims to compute the entropy at fixed energy which is given by

where is the the number of configurations with energies in the interval . It can be written as

with

Note that is itself a random variable, being dependent on the particular realization of the energy levels.

Physical range of energies of the REM

Markov's inequality bounds the probability that the a non-negative random variable is strictly positive by its average value. In our case the random variable is also integer valued, so one finds

This is also called first moment bound. Note that is a Bernoulli random variable , where

having assumed a narrow window of energies that does not scale with . The average of over the realization of the energy levels is therefore simple to compute and gives

This quantity goes exponentially to zero when if with

From Markov's inequality one finds therefore that the probability of finding energy levels with is exponentially small in . For this reason from now on we will consider as the physical interval of energies to be[2]

In this range the average number of energy levels with energy , is exponentially large in .

Self averaging property of

I want to now show that in the large limit concentrates around its average value. This can be shown by computing its variance:

where in the last step I have neglected exponentially small corrections in . A simple application of Chebyshev's inequality gives

that the random variable converges in probability exponentially fast in to its expected value.

This implies that average microcanonical entropy is given by

Note that this expression does not depend on the coarse-graining of the energy .

The free energy

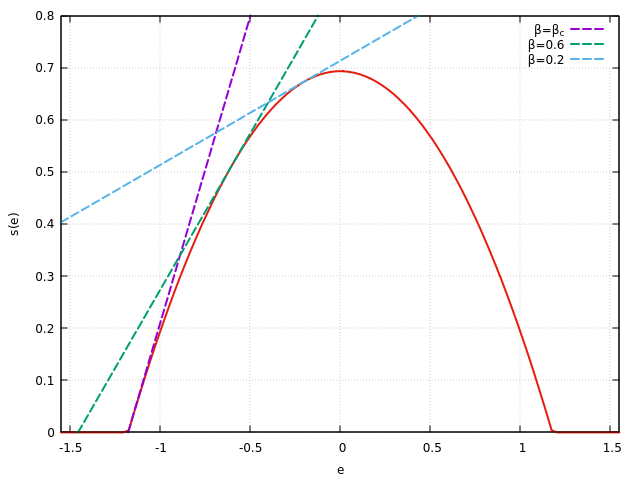

Having found the microcanonical entropy, I now wish to find the free energy of the model which requires the inverse temperature to be fixed. This can be obtained by the so called Legendre transform which is the way one passes from one ensemble to another in statistical mechanics. For the reader not familiar with changes of ensemble this is actually obtained by grouping configurations by their energy density and then integrating over all possible (allowed) energies . Sending the coarse-graining to zero (after the limit ) one finds

For large the integral is dominated by the maximum of the argument of the exponential (Laplace's method), that is

The maximization imposes

This is valid only if lies in the interval of allowed energy densities . The corresponding free energy being

If is too large (i.e. the temperature too low), the maximum of (20) is given by . This holds when

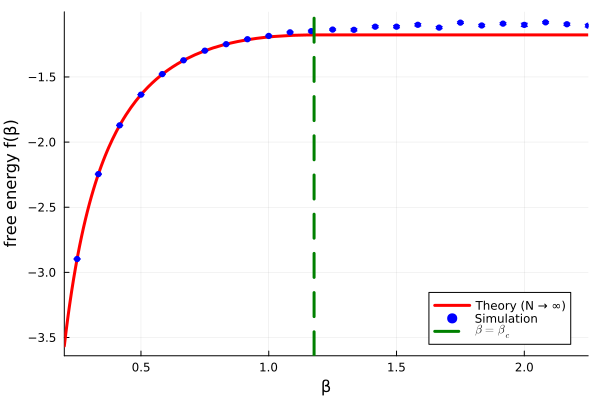

In this regime the free energy is constant. Summarizing the free energy of the REM is

This shows that at the model exhibits a second order phase transition as the derivative of is continuous at , but its second order is not. This phase transition has different names in the literature: ''freezing'', ''condensation'' and ''random first order'' (RFOT) phase transition. The first two names refers to the fact that the system ''freezes'' or ''condenses'' into the states having lowest available energies, i.e. .

The last name refers to the fact that while the transition is of second order in the classical sense, it discontinuous in terms of the order parameter. We have not introduced the order parameter in the REM model, but we will probably analyze it in a later post. Here I only anticipate the fact that at the Boltzmann-Gibbs measure in eq. (2) starts being dominated by subexponential number of configurations. This can be indeed verified by computing the entropy of the REM as a function of the temperature

Numerical checks

Below I show a simple code that computes numerically the quenched average of the free energy of the REM by sampling independent Gaussian energy levels (with ).

using Random, Statistics, Plots, LaTeXStrings

"""

Robust implementation of logsumexp

"""

logsumexp(x) = (m = maximum(x); m + log(sum(exp.(x .- m))))

"""

One-sample REM free energy

"""

function rem_free_energy_sample(N::Int, β::Float64)

M = 1 << N

σ = sqrt(N)

E = σ .* randn(M)

logZ = logsumexp(- β .* E)

return - logZ / (β * N)

end

"""

Averaged REM free energy in the limit N → ∞

"""

function rem_free_energy_theory(β::Float64)

βc = √(2log(2))

if β <= βc

return - log(2) / β - β / 2

else

return - √(2log(2))

end

end

"""

Computes the average over `nsamples` of the REM free energy

for the values of β contained in the vector `βs`

"""

function simulate_curve(N::Int, βs::Vector{Float64}; nsamples::Int=30, seed::Int=23)

if seed > 0

Random.seed!(seed);

end

means = Float64[]

stderrs = Float64[]

for β in βs

vals = [rem_free_energy_sample(N, β) for _ in 1:nsamples]

push!(means, mean(vals))

push!(stderrs, std(vals) / √nsamples)

end

return means, stderrs

end

"""

Generates a plot comparing theory vs simulations

"""

function plot_rem_free_energy(; N=18, βmin = 0.25, βmax = 2.25, nβ=25,

nsamples=30,

seed=-23,

outfile="rem_free_energy.png")

βc = √(2log(2))

βs = collect(range(βmin, βmax, length=nβ))

f_sim, f_err = simulate_curve(N, βs; nsamples=nsamples, seed=seed)

plot(rem_free_energy_theory, xlim=(βmin-0.05, βmax);

label="Theory (N → ∞)",

linewidth=3, color=:red,

xlabel="β", ylabel="free energy f(β)",

)

scatter!(βs, f_sim;

yerror=f_err, color=:blue, markerstrokecolor=:blue,

label="Simulation",

markersize=3.5

)

vline!([βc]; label=L"\beta=\beta_c", linestyle=:dash, linewidth=3, color=:green)

savefig(outfile)

end

plot_rem_free_energy(N=18, nsamples=30, outfile="./_assets/images/blog/rem_free_energy.png")The results are summarized in the figure below. The numerical results reproduce the exact free-energy curve and its freezing transition at .

| [1] | i.e. scaled with . |

| [2] | Note that the value of the energy can be obtained by asking: given normal Gaussian variables with mean zero and variance , what is the typical value attained by the maximum among those? This can be obtained by imposing that the probability of observing the random variable to be large than a (large) value is comparable to Taking logs one finds at the leading order in Using and rescaling with one gets the result. By symmetry and a similar argument one also can get the value by studying the minimum instead of the maximum. |

References

[1] Derrida, Bernard, "Random-energy model: Limit of a family of disordered models", Physical Review Letters 45.2 (1980): 79.